About Me

I'm an M.Eng. student in Robotics at the University of Maryland (Aug 2024 – May 2026), focusing on perception and planning for autonomous robots. My research centers on monocular 3D reconstruction and neural scene representations. Currently, I am a Computer Vision intern at Inception Robotics, where I am designing and implementing computer vision pipelines for reading printed text (OCR) from complex medication packaging. Previously, I was a graduate research assistant at the Perception and Robotics Group (PRG), where I worked with Dr. Yiannis Aloimonos on neural scene models and multimodal sensor calibration.

I also have prior research and industry experience in ML/AI and robotics, including a research assistantship at MARMot Lab (NUS) and an ML/AI internship at Clutterbot. I actively open-source projects on GitHub and maintain project write-ups on my portfolio.

Outside of research, I enjoy building robotics projects, hitting the gym, hiking, and playing sports like cricket and badminton.

Skills

- Python

- C

- C++

- MATLAB

- NumPy / SciPy

- Pandas / Matplotlib

- PyTorch / TensorFlow

- OpenCV / Computer Vision

- Reinforcement Learning

- LLMs / Generative Models

- CUDA

- ROS1 / ROS2

- Docker / CI/CD

- Gazebo / MuJoCo / RViz

- AWS / Google Cloud / Azure

Professional Background

Education

University of Maryland, College Park

Aug 2024 - May 2026 | College Park, MD

M.Eng. in Robotics

- CGPA: 3.77/4

- Coursework:

  - Perception & Planning for Autonomous Robots

  - Robot Modeling and Control

  - Building a manufacturing robot software system

  - Natural Language Processing

  - Multimodal Computer Vision

BITS Pilani, India

Aug 2019 - May 2024 | Pilani, India

B.E. Mechanical Engineering & M.Sc. (Hons.) Physics

- CGPA: 8.3/10

Professional Experience

Computer Vision Intern | Inception Robotics

Jan 2026 - Present

- Fine-tuned the Llama-3.2-1B-Instruct model with QLoRA to extract medication name and dosage attributes from raw OCR text with 96% accuracy.

- Engineered an automated National Drug Code (NDC) extraction pipeline by integrating a fine-tuned YOLOv5 detector with PyZbar, achieving 87% decoding accuracy across diverse label orientations.

Graduate Research Assistant | Perception & Robotics Group

May 2025 - Dec 2025

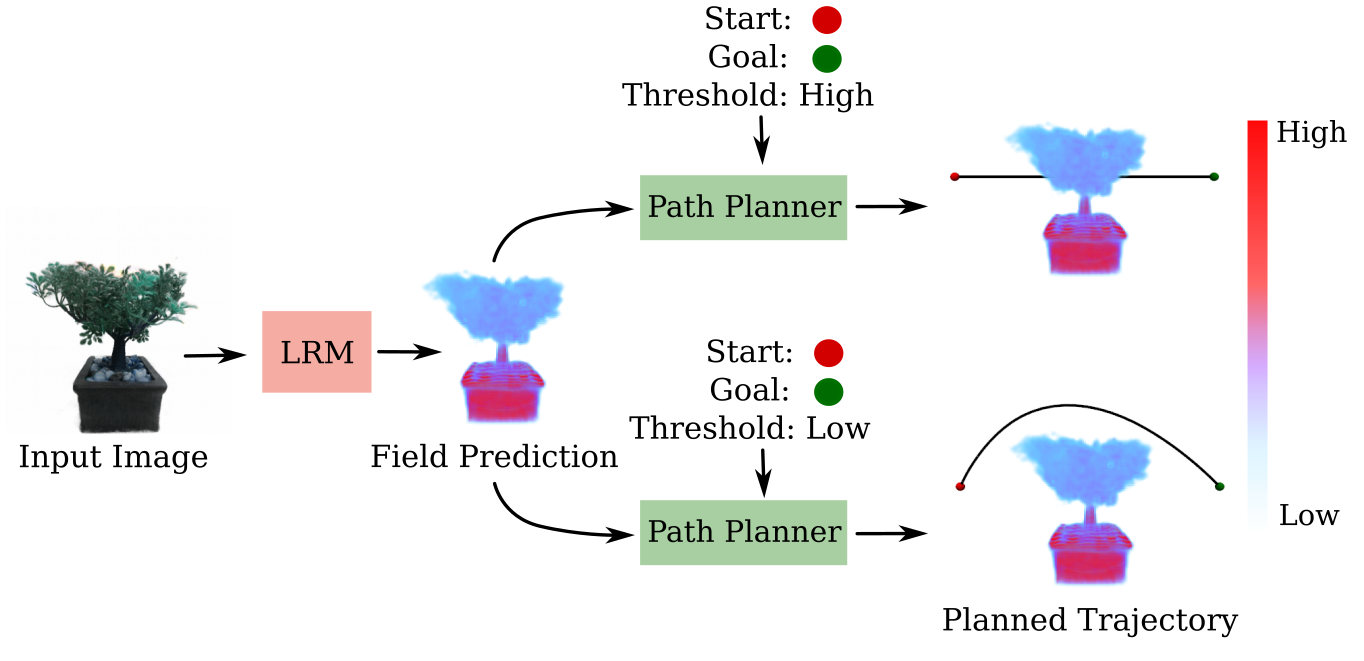

Monocular Reconstruction of Neural Tactile Fields | Advisor: Dr. Yiannis Aloimonos

- Fine-tuned a Large Multi-View Gaussian model for 3D scene density estimation, improving reconstruction accuracy by 22% over baseline NeRF models.

- Built a unified RGB-tactile neural scene representation that improved the volumetric IoU of reconstructions by 82% on unseen objects.

- Developed a multimodal calibration pipeline with 0.3 mm precision and processed 40 aligned RGB–tactile samples to support training of reconstruction models.

ML/AI Intern | Clutterbot

Dec 2023 - May 2024

Optimizing annotation and segmentation pipelines for mobile robots

- Increased YOLOv8 mAP by 20% using a Cleanlab assessment pipeline to refine dataset quality.

- Improved mean IoU by 25% with a SAM-based auto-annotation pipeline for segmentation masks.

- Ported SeaFormer to a pure PyTorch implementation, reducing training setup time by 30%.

Research Assistant | MARMot Lab, National University of Singapore

Jan 2023 - May 2024

Cooperative multi-agent RL for urban traffic control

- Implemented QMIX variants based on centralized training with decentralized execution (CTDE) and improved RL performance on traffic decongestion tasks.

- Reduced average queue length by 30% and cumulative delay by 20% with a normalized gradient entropy loss.

Project Portfolio

Frenet Optimal Trajectory Planner for ADAS

Developed a Frenet-based optimal trajectory and behavioral planner in CARLA using quintic polynomials. Implemented dynamic cost-function tuning and feasibility checks for curvature, acceleration, and jerk, achieving 100% collision-free navigation and smooth lane-change maneuvers.

VisionNav: Autonomous Navigation System

Engineered a ROS 2 computer vision and navigation pipeline for TurtleBot4, achieving 95% ArUco-based pose accuracy and 98% YOLOv11 sign detection precision; integrated EKF-based sensor fusion with LIO-SAM and optical flow to ensure robust, real-time collision avoidance.

Publications

Hobbies

I'm a generally active person and work out regularly to stay fit. I like playing badminton, cricket, and chess. I'm also passionate about singing and have performed live on stage on multiple occasions.

The attached video is my live performance of the famous patriotic song "Sandese Aate Hain" at the Hoff Theater, Stamp Student Union, during the Indian Independence Day celebration at UMD.